A long time ago, in the wake of the Windows Phone 7 (WP7) release, I posted about the Windows Phone 7 series programming model. I also wrote about how the .NET Compact Framework powered applications on WP7.

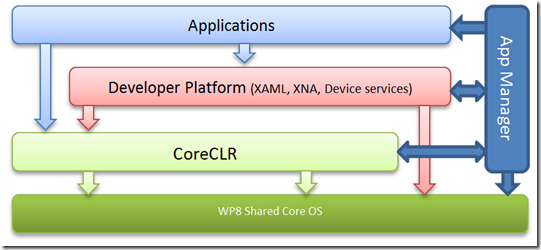

Further simplifying the block diagram, we can think of the entire WP7 application system as follows:

As with most block diagrams, this is a gross simplification. However, I hope it helps to easily picture the entire system.

Essentially, the application is purely managed (written in, say, C# or VB.NET). It can only use services exposed by the developer platform and core services provided by the .NET Compact Framework. It can in no way directly use native code or talk to the OS (say, call a Win32 API). It always has to go through the runtime infrastructure and runs in a security sandbox.

"Application manager" is the loose term I am using to encompass everything that is used to manage the application, including the host.

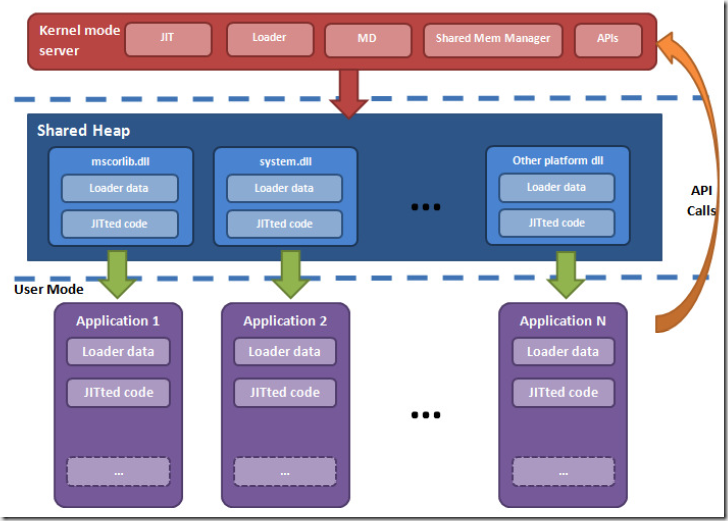

Windows Phone 8 (WP8) is a huge change from Windows Phone 7.x (WP7). From the perspective of a WP7 application running on a WP8 device, the system looks as follows:

Everything in green in this diagram was replaced outright with an entirely new codebase, and the rest of the system, other than the application, was heavily modified to work with the new OS and the new managed runtime.

Shared Windows Core

The OS moved away from the Windows Embedded Compact (WinCE) core that was used in WP7 to a new OS which shares its core with desktop Windows 8. This means that a number of things in the WP8 OS are shared with the desktop implementation, including the kernel, networking, the driver framework and others. The shared core obviously brings great value, as innovations and features will flow more easily across the two form factors (device and desktop), and it also reduces engineering redundancy on the Microsoft side. Some of the benefits are readily visible today, like great multi-core support and WinRT interop; others are more subtle.

CoreCLR

The .NET Compact Framework (NETCF) that was used in WP7 has a very different design philosophy, and hence a completely different implementation, from the desktop .NET. I will have a follow-up post on this, but for now it suffices to note that NETCF is a very portable runtime designed to be versatile and cross-platform. The desktop CLR, on the other hand, is more closely tied to Windows and the processor architecture. It works closely with the OS and the underlying hardware to give the maximum performance benefit to managed code running on it.

With the new Windows RT, which runs on ARM, the desktop CLR had already been updated to work on ARM processors. So when the phone moved to the shared core, moving the CLR as well was an obvious choice. This gave the same benefits of shared innovation and reduced engineering redundancy.

The full desktop CLR is heavier and provides functionality that phone scenarios do not really require, so a lighter variant of it (built from the same source) called CoreCLR was chosen for WP8. CoreCLR is the evolution of the lightweight runtime that powered Silverlight. With the move to CoreCLR, developers get a much faster runtime with an extended feature set that includes interop via WinRT.

Backward Compat

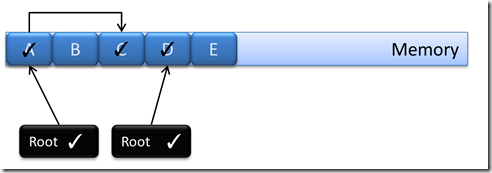

One of the simple statements made during the WP8 launch presentations was that applications in the store built for WP7 will work as is on WP8. This is a small statement, but it represents a huge effort and achievement from the runtime implementation perspective. Making apps work as is when the entire runtime, OS and chipset have changed is non-trivial, to say the least. Our team worked very hard to make this possible.

One of the biggest things that worked in our favor was that WP7 apps were fully sandboxed and couldn’t use any native code. This means that they had no way of taking a behavioral dependency on the OS APIs or the underlying hardware. OS APIs were only used via the CLR, which could always add quirks to expose consistent behavior to applications.

API Compatibility

This required us to ensure that CoreCLR exposes the same API set as NETCF. We ran various automated tools to manage API surface area changes and retain meaningful API compat between WP7 and WP8. With a closed application store, it was possible for us to gather complete metrics on API usage and correctly prioritize engineering resources to ensure that the majority of applications continued to see the same API set in both signature and semantics.

We also needed to ensure that the same APIs behave as closely as possible to those provided by NETCF. We tested a lot of applications from the app store to get as close as we could, and we believe we are at a place that should allow most WP7 applications' API usage to carry over transparently to the new runtime.
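To make the idea of comparing API surface area concrete, here is a minimal sketch of a signature diff built on reflection. It is not the tooling our team used; `ApiSurface`, `OldApi` and `NewApi` are hypothetical names invented for this illustration, and a real check would walk whole assemblies and also compare semantics, which no signature diff can do.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Reflection;

// Hypothetical "old" and "new" versions of the same type, for illustration only.
public class OldApi
{
    public void Open(string path) { }
    public void Close() { }
}

public class NewApi
{
    public void Open(string path) { }
}

public static class ApiSurface
{
    // Collects the signatures of the public methods declared directly on a type.
    public static HashSet<string> PublicMethods(Type type)
    {
        const BindingFlags flags = BindingFlags.Public | BindingFlags.Instance |
                                   BindingFlags.Static | BindingFlags.DeclaredOnly;
        return new HashSet<string>(type.GetMethods(flags).Select(m => m.ToString()));
    }

    // Returns the signatures present in the baseline but absent from the candidate.
    public static List<string> Missing(Type baseline, Type candidate)
    {
        return PublicMethods(baseline)
            .Except(PublicMethods(candidate))
            .OrderBy(s => s)
            .ToList();
    }
}
```

Calling `ApiSurface.Missing(typeof(OldApi), typeof(NewApi))` reports the `Close` method as missing from the candidate, which is the kind of signal that would flag an API gap before an application hits it.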

Runtime behavior changes

When a runtime changes, there are behavioral changes that can expose pre-existing issues in applications. This includes things like timing differences. Even though these runtime behaviors are not documented, or in some cases it is explicitly called out that user code should not depend on them, some apps still did.

A couple of the examples we saw:

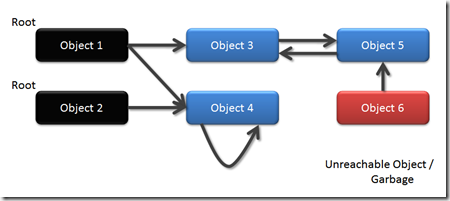

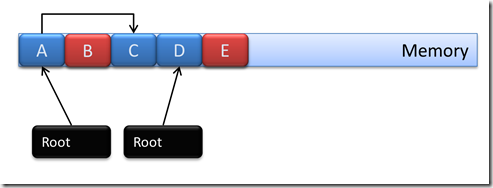

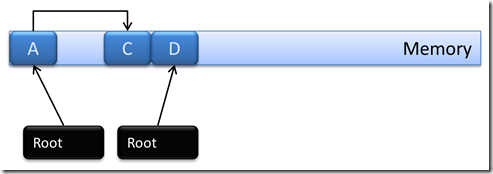

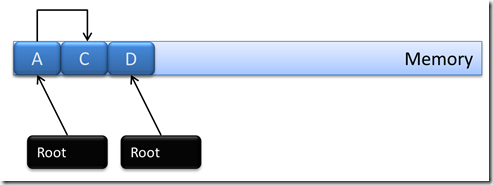

- Taking a dependency on finalization order

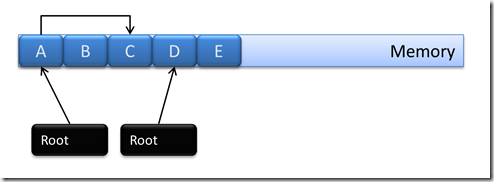

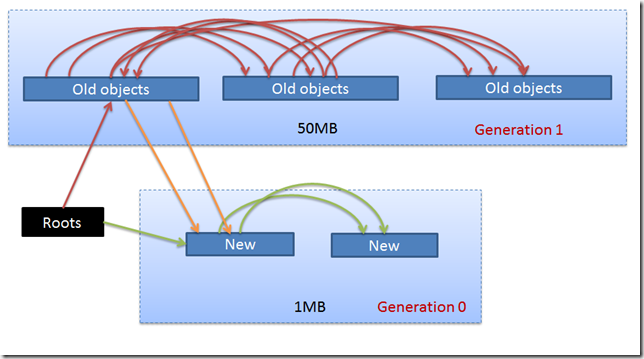

Even though the CLI specification clearly calls out that finalization order in .NET is not deterministic, code still took subtle dependencies on it. In one particular case, object F1 used a file and its finalizer released it. Later, another object F2 opened the same file. With the change in runtime, the timing of the GC changed, so that at the time F2 tried to open the file, F1, which was already eligible for collection, had not yet had its finalizer run. Hence the application crashed. We got in touch with the app developer and got the code moved to use the right dispose pattern.

- GC timing and changes in the number of generations

Contrary to .NET guidelines, some application finalizers modified other managed objects. With the changed GC timing and generations, those objects had already been collected by the time the finalizer ran, which resulted in finalizer crashes.

- Threading issues

This was one of the painful ones. With the change in OS (and hence the thread scheduler) and the addition of multiple cores in the ARM CPU, a lot of subtle races and deadlocks in applications got exposed. These were pre-existing issues where synchronization primitives were not used correctly, or where code relied on the quantum-based thread scheduling WinCE did. With the move to WP8, threads run in parallel on different cores and are scheduled in a different order, which led to various deadlocks and race-driven crashes. Again, we had to get in touch with app developers to address these issues.

- Relying on the exact precision of floating-point math. In one board game, this resulted in pucks flying off the board.

- Whether or not functions got inlined

- Order of static constructor initialization (especially in conjunction with function inlining)
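For the finalization-order case above, the fix is to release the file deterministically instead of waiting for a finalizer. The following is a minimal sketch of that dispose pattern, not the actual application's code; `LogFile` and `DisposeDemo` are hypothetical names, and plain `FileStream` is assumed here rather than the phone's isolated storage APIs.

```csharp
using System;
using System.IO;

// Hypothetical wrapper standing in for the F1/F2 objects described above.
public sealed class LogFile : IDisposable
{
    private FileStream stream;

    public LogFile(string path)
    {
        // FileShare.None: a second open fails while this handle is still held.
        stream = new FileStream(path, FileMode.OpenOrCreate,
                                FileAccess.ReadWrite, FileShare.None);
    }

    public void WriteByte(byte value)
    {
        if (stream == null) throw new ObjectDisposedException("LogFile");
        stream.WriteByte(value);
    }

    // Releases the file immediately; safe to call more than once.
    public void Dispose()
    {
        if (stream != null)
        {
            stream.Dispose();
            stream = null;
        }
    }
}

public static class DisposeDemo
{
    // Opens the same file twice in a row. This works regardless of GC timing
    // because each using block closes the handle before the next open.
    public static void OpenTwice(string path)
    {
        using (var f1 = new LogFile(path)) { f1.WriteByte(1); }
        using (var f2 = new LogFile(path)) { f2.WriteByte(2); }
    }
}
```

Because the class is sealed and holds only a managed `FileStream`, it does not need a finalizer of its own. The point is that the `using` block, not the garbage collector, decides when the file is released.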

We addressed some of these issues where it was realistic to fix them in the runtime. For some of the others, we got in touch with the application developers. Obviously, not every application in the store, nor every feature of each application, was tested. So you should test your applications when you have access to WP8.

At one point we were all playing games on our phones and telling our managers, "I am compat testing Need For Speed and I need to test till level 10" :)
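For the threading issues in particular, the application-side fix usually comes down to guarding shared state explicitly rather than relying on how the scheduler happens to interleave threads. Here is a minimal sketch, assuming a simple shared counter; `HitCounter` and `CounterDemo` are hypothetical names chosen for this illustration.

```csharp
using System.Threading;

// A counter that stays correct when threads truly run in parallel on several cores.
public sealed class HitCounter
{
    private readonly object gate = new object();
    private int count;

    public void Increment()
    {
        // Without the lock, count++ is a read-modify-write that can lose updates.
        lock (gate) { count++; }
    }

    public int Count
    {
        get { lock (gate) { return count; } }
    }
}

public static class CounterDemo
{
    // Starts the given number of threads, each incrementing the shared counter.
    public static int Run(int threads, int perThread)
    {
        var counter = new HitCounter();
        var workers = new Thread[threads];
        for (int i = 0; i < threads; i++)
        {
            workers[i] = new Thread(() =>
            {
                for (int j = 0; j < perThread; j++) counter.Increment();
            });
            workers[i].Start();
        }
        foreach (var worker in workers) worker.Join();
        return counter.Count;
    }
}
```

With the lock in place, `CounterDemo.Run(4, 10000)` returns 40000 on any number of cores. Code that skips the lock may appear to work under one scheduler and then lose updates under another, which is the kind of latent bug the move to WP8 exposed.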