In this post I attempt to separate both the hype and the naysaying from what I am actually experiencing and seeing around me.

There is a well-worn observation that we tend to overestimate the short-term impact of new technology and underestimate the long-term impact. AI fits this pattern almost perfectly.

On one end, you have tech CEOs claiming all white-collar work is about to disappear. On the other, you have experienced engineers who tried ChatGPT once many months ago and concluded it is a toy. Both are wrong, but for different reasons.

Why the Hype Exists

The bold claims are not accidental. When you need to raise tens of billions of dollars to build GPU datacenters, nuanced messages do not get the job done. "AI will meaningfully improve the productivity of senior engineers over the next few years" does not unlock capital markets. "AI will replace all software engineers" does.

Similarly, the news cycle swings between "vibe coding can build anything" and "vibe coding caused a massive outage." Neither framing is particularly useful if you are trying to make real decisions about how to staff and run your teams.

Why the Skepticism Exists

When I talk to friends and former colleagues outside the large tech companies, I notice a consistent gap. Many of them tried AI tools months ago, maybe with GPT-3.5 or an early Copilot version. Their company does not provide access to frontier models like Claude Opus or GPT-5.4, let alone unlimited tokens. They tried it, it felt mediocre, and they moved on.

The pace of improvement has been so fast that the tool they evaluated six months ago bears little resemblance to what is available today. And if keeping up with AI is not your primary job, you simply cannot track all of it. Most have never experienced agentic workflows, SRE agents, SWE agents, or team-shared skills that give these models custom context for your specific codebase and organization.

What Is Actually Happening

Here is what I see when I talk to principal engineers and senior leaders who have been using these tools seriously: their day-to-day engineering work has shifted completely over the last few months.

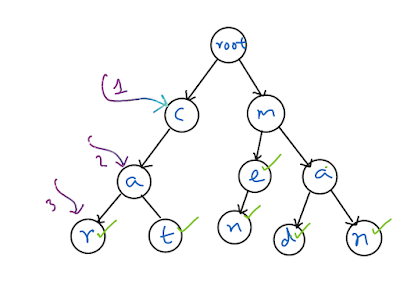

In the earlier model, a senior engineer would write a design, sometimes a very detailed specification, hand it to an early-career engineer, wait for the code, review it, iterate, and eventually accept it. That loop could take days or weeks.

Now, those same senior engineers are directing AI agents to do much of that execution work. They provide the design intent, the constraints, the tradeoffs. The agent produces the code. The senior engineer reviews, adjusts, and ships. The cycle compresses dramatically.

The engineers who understand systems deeply, who know the principles of distributed computing, who can reason about tradeoffs across reliability, performance, cost, and security: those engineers are becoming genuinely 10x more productive. Not because the AI is magic, but because it removes the bottleneck of translating their knowledge into code line by line. At the same time, the AI in this scenario is not going off the rails on its own; these are agents with humans in the loop. Think of the agents as early-career engineers, or even interns, who do specific tasks within defined bounds; their work is then reviewed before it goes into production.

Where the Impact Starts

The uncomfortable truth is that this shift starts at the bottom of the experience ladder. Entry-level engineering roles are the first to feel the pressure. Not because those engineers are not talented, but because the work they typically do, taking a well-defined spec and implementing it, is exactly what AI agents are getting good at.

For the foreseeable future, we will need experienced engineers to direct these agents. You still need someone who knows what good looks like. But instead of that person supervising three or four junior engineers, they are now supervising a set of AI agents. The volume of output goes up, and over time, the agents will mature and move up the complexity ladder as well.

Beyond Software Engineering

This is not limited to code. If someone's job involves looking at data on a screen, making a decision based on patterns, and taking an action on a computer, that role is already under pressure. It may be held in place temporarily by legal constraints, organizational inertia, or government regulation. But if the generic frontier models do not disrupt it today, a startup training a specialized model for that exact problem will.

The framing I find most useful is not "will this role disappear entirely?" but rather "can one experienced person with AI agents do the work of a small team?" In many cases, the answer is already yes.

The Cost Objection

I hear this frequently: "Sure, this works at Microsoft scale, but we cannot afford unlimited tokens and frontier models." That is a fair point today. But the history of compute costs in this industry is unambiguous. Prices drop exponentially. What is expensive today becomes commodity tomorrow. The organizations that wait for costs to drop before even experimenting will find themselves behind the ones that built the muscle early.

The Honest Takeaway

AI is not replacing all engineers. It is not replacing all white-collar workers. Those are fundraising narratives, not operational realities.

But it is genuinely changing the ratio. Teams that once needed eight engineers to deliver a project can do it with three experienced ones and a set of well-directed agents. That is not hype. That is what I am seeing, month after month.

The engineers who will thrive are the ones who understand systems, who can articulate what good looks like, and who learn to work with these tools as a force multiplier. The ones most at risk are those whose primary value was the mechanical translation of someone else's design into working code.

That is the real shift, and it is already here.