One of the pain points we face with remote development is having to go through a few extra hops to reach our virtual dev boxes. Many of us use Azure VMs for development (in addition to local machines), and our security policy is to lock down all VMs to the Microsoft corporate network.

So to SSH or RDP into an Azure VM for development, we first connect over VPN to the corporate network, then use a corpnet machine to log in to the VM. That is painful, and even more so now that we are working remotely.

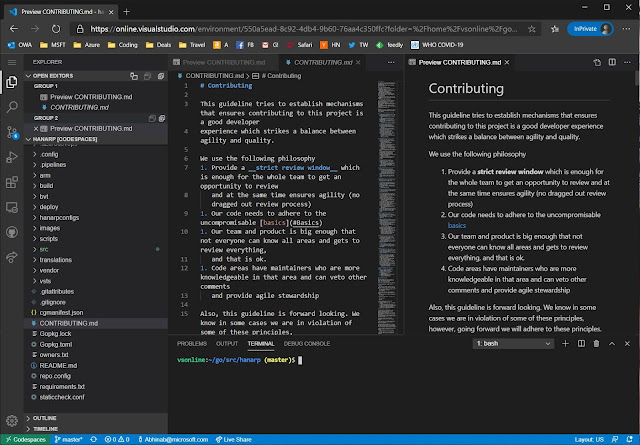

This is where the newly announced Visual Studio Codespaces comes in. Basically, it is VS Code hosted in the cloud. It runs beautifully inside a browser and, best of all, comes with full access to the Linux shell underneath. Since it is run as a service and secured by the Microsoft team building it, we can simply use it from a browser on any machine (over two-factor authentication, of course).

At the time of writing this post, the cost is around $0.17 per hour for 4 cores/8 GB, which brings the price to around $122 at most for a whole month of continuous use (24 hours × 30 days). Codespaces also has a snooze feature, which I set to kick in after one hour of no usage. This does mean additional startup time when you next log in, but it saves even more money. The state of the box is retained across snoozes.
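To make the arithmetic concrete, here is a rough sketch of the estimate. The $0.17 hourly rate is the one quoted above; the 8-hours-a-day, 22-working-days pattern is a hypothetical example of mine, and the sketch assumes you only pay the hourly rate while the box is active, ignoring any other charges that may apply.

```python
# Rough monthly cost estimate for a 4 core / 8 GB Codespace.
# The hourly rate comes from the post; usage patterns below are illustrative.
HOURLY_RATE_USD = 0.17


def monthly_cost(hours_per_day: float, days: int = 30,
                 rate: float = HOURLY_RATE_USD) -> float:
    """Return the estimated cost in USD, rounded to cents."""
    return round(hours_per_day * days * rate, 2)


# Worst case: the box never snoozes.
always_on = monthly_cost(24)

# Hypothetical pattern: 8 active hours on 22 working days, snoozed otherwise.
workdays_only = monthly_cost(8, days=22)

print(f"always on:     ${always_on:.2f}")
print(f"workdays only: ${workdays_only:.2f}")
```

Under those assumptions, snoozing outside working hours cuts the bill to roughly a quarter of the always-on figure.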

While just being able to use the IDE on our code base is cool in itself, having access to the shell underneath is even cooler. Hit Ctrl+` in VS Code to bring up the terminal window.

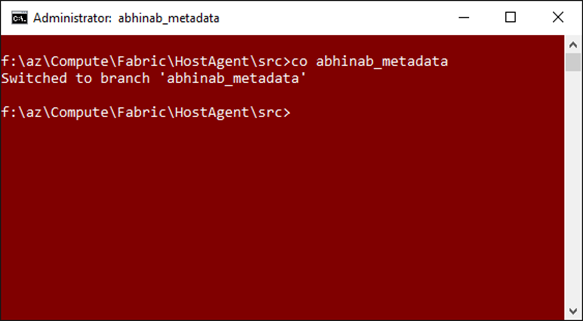

I then synced my Linux development environment from https://github.com/abhinababasu/share and installed the packages I need. Now I have a full shell and IDE in the cloud, just the way I want it.

To try out Codespaces, head to https://aka.ms/vso-login