I just returned home from a trip and had one of those dreaded moments: one of the disks on my home desktop had failed! Thankfully, I have a backup strategy that works for me, and this is the second time I have had to rely on it.

How I Choose What to Back Up

This experience reinforced my belief in keeping things simple. I categorize my data as:

Important: Family photos, essential documents, critical projects.

Not Important: Old files, random downloads, stuff that doesn’t need my attention.

Less clutter means lower backup costs, simpler backups, and faster restores. I make sure a few critical folders have multiple backups, including remote ones; everything else I can delete at will without worrying.

Staying Cost-Effective

Finding affordable yet effective backup options has always been my goal. No one wants to spend money unnecessarily on backups that provide minimal additional benefits.

My Backup Philosophy: The 3-2-1 Rule

I follow the popular 3-2-1 backup rule:

3 copies of all important data.

2 different storage types (local NAS and cloud).

1 off-site backup for worst-case scenarios (think fires or theft).
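To make the rule concrete, here is a minimal Python sketch that checks a set of copies against 3-2-1. The `BackupCopy` type and `satisfies_321` function are hypothetical names of my own, and the sketch assumes the common reading that the original data counts as one of the three copies. The example list models my RAW photo archive: the desktop itself, the NAS, and Backblaze.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BackupCopy:
    """One copy of a dataset (hypothetical model, for illustration only)."""
    location: str      # e.g. "desktop", "NAS", "Backblaze"
    storage_type: str  # e.g. "local disk", "NAS", "cloud"
    off_site: bool     # True if the copy lives outside the house


def satisfies_321(copies: list[BackupCopy]) -> bool:
    """Return True if the copies meet the 3-2-1 rule."""
    enough_copies = len(copies) >= 3                          # 3 copies
    enough_types = len({c.storage_type for c in copies}) >= 2  # 2 storage types
    has_off_site = any(c.off_site for c in copies)            # 1 off-site
    return enough_copies and enough_types and has_off_site


# RAW photo archive: the original on the desktop plus two backups.
raw_photos = [
    BackupCopy("desktop", "local disk", off_site=False),
    BackupCopy("NAS", "NAS", off_site=False),
    BackupCopy("Backblaze", "cloud", off_site=True),
]

print(satisfies_321(raw_photos))      # True
print(satisfies_321(raw_photos[:2]))  # False: only two copies, neither off-site
```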

My Actual Backup Setup

Here's the practical side of my setup:

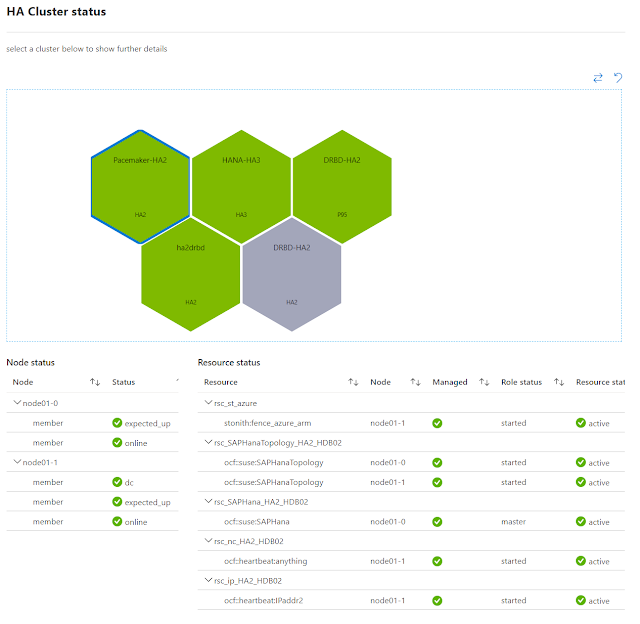

Local NAS: Quick, reliable backups. All my devices continuously back up their entire contents here, which let me quickly recover my desktop's data this time. I treat it as a local cache. I have been using a Synology DS2xx-series NAS for some time now.

Cloud Storage (OneDrive): Essential files from my laptops, desktop, and phone (including camera photos) sync automatically, so I can reach them from anywhere. OneDrive is an easy choice for me: the family tier includes 1 TB per person, which alone covers everything critical except my huge collection of RAW images (see below).

Cloud Backup (Backblaze): If not for my huge collection of RAW images from my DSLR, I'd likely have stuck with the first two. However, my desktop holds a few terabytes of images that simply cannot fit in OneDrive, so the desktop backs up fully (all drives) to Backblaze as protection against serious emergencies. I have been using Backblaze for years. I rely on its 30-day version history, and in the past I needed a large restore, for which they shipped me an SSD containing the folders I wanted back. Backblaze also lets you set your own encryption key, which is used by the client and not kept by Backblaze. So if you lose that key, all of your data is lost.

Backup is Easy, but Restore is Crucial

Backing up data is easy; restoring it reliably when something goes wrong is the real test. Every six months, I run a mini disaster-recovery drill:

Checking that backups are up-to-date.

Restoring a few random files to verify everything works.
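The "restore a few random files" step can be partly automated. Below is a minimal Python sketch, assuming you have already restored a folder (from the NAS or Backblaze) into a separate scratch location with the same folder layout. It picks random files from the live folder and compares SHA-256 checksums with the restored copies. The function names are my own hypothetical choices, not part of any Synology, OneDrive, or Backblaze tool.

```python
import hashlib
import random
from pathlib import Path
from typing import Optional


def sha256_of(path: Path) -> str:
    """Hash a file in 1 MiB chunks so large RAW files don't fill memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_random_restores(source: Path, restored: Path, sample_size: int = 5,
                           seed: Optional[int] = None) -> dict[str, bool]:
    """Pick random files under `source` and check that the file at the same
    relative path under `restored` exists and has an identical SHA-256.

    Returns a mapping of relative path -> True (match) / False (missing or
    different).
    """
    files = sorted(p for p in source.rglob("*") if p.is_file())
    rng = random.Random(seed)  # pass a seed to make the sample repeatable
    sample = rng.sample(files, min(sample_size, len(files)))
    results: dict[str, bool] = {}
    for original in sample:
        rel = original.relative_to(source)
        copy = restored / rel
        results[str(rel)] = copy.is_file() and sha256_of(copy) == sha256_of(original)
    return results
```

For example, after restoring a photo folder into a scratch directory, you could call `verify_random_restores(Path("D:/Photos"), Path("D:/RestoreTest/Photos"))` (hypothetical paths) and look for any `False` entries. A mismatch on a file you edited after the last backup is expected; a missing or mismatched file you haven't touched is worth investigating.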

This practice made my recent restore straightforward and stress-free.